Scarcely a day goes by right now without a breathless newspaper headline about how artificial intelligence (AI) is going to turn us all into superhumans, if it doesn’t end up replacing us first. But what do we really mean by AI, and what could we do with it? In this post I’ll take a look at the state of the art, and at how you could build your own Do-It-Yourself “seeing eye” AI using a cheap Raspberry Pi computer and some free software from Google called TensorFlow.

If you want to have a go at doing this yourself, I’m following a brilliant step-by-step guide produced by Libby Miller from BBC R&D. I should also note that this is only possible thanks to Sam Abrahams, who got TensorFlow working on the Raspberry Pi and also literally wrote the book on TensorFlow.

Raspberry Pi logo screen-printed onto a motherboard

AI right now is mainly focused on pattern recognition – in still and moving images, but also in sounds (recognising words and sentences) and text (recognising, for example, that this text is written in English). A good example would be the Raspberry Pi logo in the picture above. Even though it’s a little blurred and we can’t see the whole thing, most people would recognise that the picture includes some kind of berry. People familiar with the diminutive low-cost computer would identify the Raspberry Pi logo almost instantly – and the circuit board background might help to jog their memory.

While we talk about “intelligence”, the truth is that this pattern recognition is pretty dumb. The intelligence, if there is any, is supplied by a human being adding rules that tell the computer which patterns to look out for and what to do when it finds a match. So let’s try a little experiment – we’ll attach a camera and a speaker to our Raspberry Pi, teach it to recognise the objects the camera sees, and have it tell us what it’s looking at. This is a very slow and clunky low-tech version of the OrCam, a new device that helps blind and partially sighted people live independently.
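To make the “human-supplied rules” idea concrete, here is a minimal Python sketch of that layer, assuming the image classifier has already handed back a list of (label, confidence) pairs. The function name `describe`, the 0.3 and 0.8 thresholds and the spoken phrases are all hypothetical choices for illustration, not part of TensorFlow or Libby’s guide; the sentence it returns is the kind of thing you would pass to a text-to-speech program.

```python
# A minimal sketch of the rules a human adds on top of a classifier.
# Assumption: the classifier returns (label, confidence) pairs, with
# confidence between 0 and 1. Thresholds and wording are hypothetical.

def describe(predictions, threshold=0.3):
    """Turn classifier output into a sentence to speak aloud."""
    if not predictions:
        return "I can't see anything I recognise."
    # Rule 1: only consider the single most confident match.
    label, confidence = max(predictions, key=lambda p: p[1])
    # Rule 2: if even the best match is weak, admit it rather than guess.
    if confidence < threshold:
        return "I'm not sure what I'm looking at."
    # Rule 3: hedge the wording according to how confident the model is.
    if confidence >= 0.8:
        return f"I'm looking at a {label}."
    return f"I think I might be looking at a {label}."


print(describe([("tabby cat", 0.91), ("tiger cat", 0.05)]))
# → I'm looking at a tabby cat.
print(describe([("teapot", 0.45), ("coffee mug", 0.30)]))
# → I think I might be looking at a teapot.
print(describe([("banana", 0.12)]))
# → I'm not sure what I'm looking at.
```

None of these rules is clever: they simply encode a person’s judgement about when the pattern match is good enough to act on.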

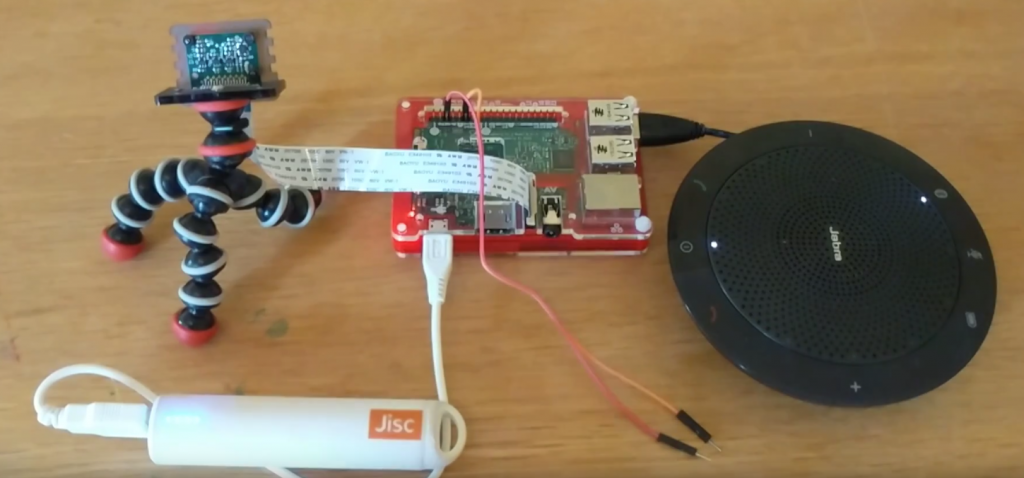

Our Raspberry Pi powered “seeing eye” AI

The Raspberry Pi uses very little electricity, so you can actually run it off a battery, although it’s not as portable or as sleek as the OrCam! And rather than a speaker, you could simply plug in a pair of headphones – but the speaker makes my demo video work better. I used a Raspberry Pi model 3 (£25) and an official Raspberry Pi camera (£29). If you’re wondering what the wires are for, this is my cheap and cheerful substitute for a shutter release button for the camera, using the Raspberry Pi’s General Purpose Input/Output (GPIO) connector. GPIO makes it easy to connect all kinds of hardware and expansion boards to your Raspberry Pi.

So that’s the hardware – what about the software? That’s the real brains of our AI…

Google’s TensorFlow is an open source machine learning system. Machine learning is the technology that underpins most modern AI systems, and it’s responsible for the pattern recognition I was talking about just now. Google took the bold step of not just making TensorFlow freely available, but also giving everyone access to the source code of the software by making it “open source”. This means that developers all over the world can (and do) enhance it and share their changes.

The catch with machine learning is that you need to feed your AI lots of example data before it’s able to successfully carry out that pattern recognition I was talking about. Imagine that you are working with a self-driving car – before it can be reasonably sure what a cat running out in front of the car looks like, the AI will need training. You would typically do this by showing it lots of pictures of cats running out in front of cars, maybe gathered during your human driver assisted test runs. You’d also show it lots of pictures of other things that it might encounter which aren’t cats, and tell it which pictures are the ones with cats in. Under the hood, TensorFlow builds a “neural network”, a crude simulation of the way our own brains work.
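Here is a toy illustration of that training process in plain Python. It isn’t TensorFlow: it’s a single artificial “neuron” (logistic regression), the simplest building block of a neural network, trained by gradient descent. The two numbers describing each picture are invented stand-ins for features (a real image model learns its own features from the raw pixels), and the 1/0 labels play the part of you telling it which pictures have cats in.

```python
import math

# A minimal sketch of learning from labelled examples with one neuron.
# Hypothetical features per picture: ("pointy ears" score, "whiskers" score).
# Label 1 = cat, 0 = not a cat. All data here is made up for illustration.
examples = [
    ((0.9, 0.8), 1), ((0.8, 0.9), 1), ((0.7, 0.95), 1),
    ((0.1, 0.2), 0), ((0.2, 0.1), 0), ((0.3, 0.05), 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """Probability (0 to 1) that the features describe a cat."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def train(examples, epochs=1000, learning_rate=0.5):
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in examples:
            # How wrong was the current guess for this labelled picture?
            error = predict(weights, bias, features) - label
            # Nudge each weight to shrink that error
            # (stochastic gradient descent on the log-loss).
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(examples)
print(round(predict(weights, bias, (0.85, 0.9)), 3))  # new, cat-like picture
print(round(predict(weights, bias, (0.15, 0.1)), 3))  # new, not-cat picture
```

A real network stacks thousands of these neurons in layers, but the core idea of repeatedly nudging weights to reduce the error on labelled examples is the same.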

So let’s give our Raspberry Pi and TensorFlow powered AI a spin – watch my video below:

Now for a confession – I didn’t actually sit down for hours teaching it myself. Instead I used a ready-made TensorFlow model that Google have trained using ImageNet, a free database of over 14 million images. It would take a long time to build this model on the Raspberry Pi itself, because it isn’t very powerful. If you want to create a complex model of your own and don’t have access to a supercomputer, you can rent computing time from the likes of Google, Microsoft and Amazon to do the work for you.
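Because the model is ready-made, all the Raspberry Pi has to do is run each photo through it once and read off the most likely answers. A classifier like this typically produces one raw score per category; the sketch below, in plain Python with invented labels and scores, shows one common way those scores become probabilities (softmax) and a ranked shortlist. In TensorFlow itself, `tf.nn.softmax` and `tf.math.top_k` do the same job.

```python
import math

# A minimal sketch of post-processing a classifier's raw scores.
# Labels and scores are invented; a real ImageNet model has one score
# for each of its categories.


def softmax(scores):
    # Subtract the max before exponentiating to avoid overflow.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(labels, scores, k=5):
    """Return the k most likely (label, probability) pairs, best first."""
    probs = softmax(scores)
    ranked = sorted(zip(labels, probs), key=lambda p: p[1], reverse=True)
    return ranked[:k]


labels = ["banana", "teapot", "coffee mug", "tabby cat", "remote control", "mouse"]
scores = [0.5, 1.2, 2.9, 0.1, 4.0, 3.1]

for label, prob in top_k(labels, scores, k=3):
    print(f"{label}: {prob:.2f}")
```

Showing a shortlist rather than a single answer is useful when the top two guesses are close, which happens often with blurry or partly hidden objects like the logo above.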

So now you’ve seen my “seeing eye” AI, what would you use TensorFlow for? Why not leave a comment and let me know…