An estimated 30 million smart speakers have been sold in the United States alone, with devices like Google Home and Amazon Echo (“Alexa”) nestling in the corner of many living rooms, kitchens and yes – even bedrooms. Amazon are betting big that you’ll want one in every room, even selling the Echo Dot in a six pack and twelve pack.

The digital assistants in these smart speakers seem like something from a science fiction movie – you can ask Alexa everything from general knowledge questions to very specific things like getting a weather forecast or checking to see if there are likely to be any delays on your commute into work. But how do they really work, and how might you go about teaching a digital assistant like Alexa some new tricks?

Amazon Echo – photo CC BY-NC-ND Flickr user michaeljzealot

In this post I’ll look at how to create a new “skill” for Alexa, and hopefully demystify the technology a little bit.

At Jisc we operate dozens of services, for around 18 million people – from teachers and learners to researchers and administrators. Why log a call about a product or service when you could just ask Alexa? Maybe you could get your question answered there and then, from the information that we already have in our systems?

I thought it might be fun to work up a few practical examples, for instance:

- I’m a network manager or IT director. What’s the status of the Janet network, and are there any connections down? Our Janet status page and Netsight service have this info

- I’m a researcher writing a new paper. What’s this journal’s open access policy? Our SHERPA services let you conveniently find out about publisher and funder OA policies

- I’m an administrator reviewing a grant application before submission. Do we really need to build a hyperbaric chamber, or is there another institution nearby that has one? Our equipment.data service lets you find kit that institutions are sharing with each other and industry

What would that look (and sound) like? Here’s a short video that my daughter and I made to demo our prototype Alexa skill for Jisc:

The Alexa digital assistant is the Amazon product that underlies all of the Echo products, and we are also starting to see Alexa appear in third party products like the recently announced Sonos One speakers. If you don’t like saying “Alexa”, or perhaps someone in your house has a similar name, you can call it “computer” instead – very Star Trek!

Amazon provide developers with a set of tools for building new Alexa applications. I’ll give you a quick overview here, but as you can imagine there is quite a lot of detail for those who want to take a deep dive into all things Alexa and Echo related.

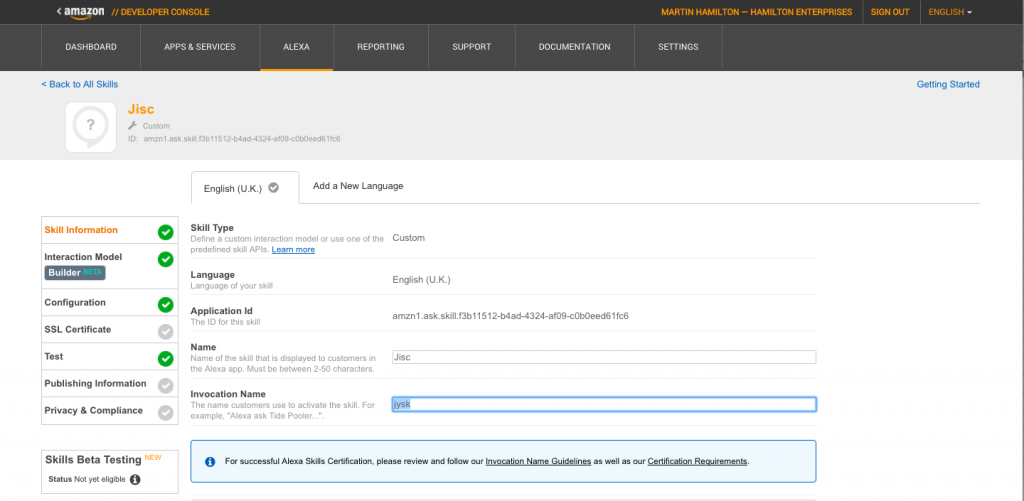

First off, you have to tell Amazon what your new Alexa skill will be called – it needs to have a distinctive name because there are already over 15,000 skills out there. It turned out that “Jisc” doesn’t work as our skill’s name, and I had to resort to a phonetic spelling of our company’s name instead – “Jysk”. Once you find the right word or words to invoke the skill, people will be able to say “Alexa, ask Jisc” – or rather, “Jysk”!

Defining the Jisc (“Jysk”) Alexa skill

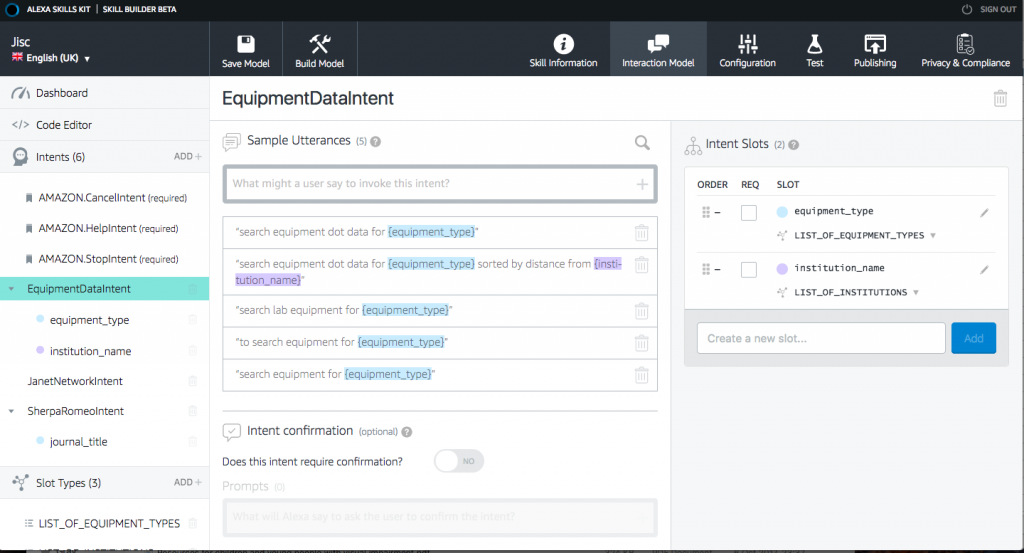

Secondly, you need to tell Amazon what kinds of questions people will be asking of your skill. This is where the magic and mystery of Alexa starts to unravel a little. It turns out that you have to be pretty precise about the wording of the phrases that you want Alexa to respond to, although there is a little wiggle room. For instance, if we tell Alexa to respond to questions about “janet network status”, it will also recognise that “status of the janet network” is the same question worded slightly differently.

Sample utterances for equipment.data search

Thirdly, we need to tell Alexa how to find out the answer to the question. If it’s a simple question like “janet network status”, then this isn’t actually too hard either – we just need a place that we can go to for the requested info. And if it’s already available on the web somewhere, then we can even copy the info off the webpage without having to set up some kind of complicated database connection or Application Programming Interface (API).
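To give a flavour of what “copying the info off the webpage” can mean in practice, here is a minimal sketch in Python using only the standard library. The markup in `SAMPLE_PAGE` and the `status` element id are invented for illustration; the real status page’s structure isn’t shown in this post, so treat this as the general idea rather than the actual scraper.

```python
from html.parser import HTMLParser

# Hypothetical markup standing in for a status page. The id "status"
# is an assumption made for this example.
SAMPLE_PAGE = """
<html><body>
  <h1>Network status</h1>
  <p id="status">All connections are
     operating normally.</p>
</body></html>
"""


class StatusExtractor(HTMLParser):
    """Collects the text inside the element whose id matches target_id.

    Assumes the target element contains no unclosed void tags (e.g. <br>).
    """

    def __init__(self, target_id):
        super().__init__()
        self.target_id = target_id
        self.depth = 0      # greater than 0 while inside the target element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if self.depth > 0:
            self.depth += 1             # nested tag inside the target
        elif dict(attrs).get("id") == self.target_id:
            self.depth = 1              # entering the target element

    def handle_endtag(self, tag):
        if self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth > 0:
            self.chunks.append(data)


def extract_status(html, target_id="status"):
    """Return the target element's text with whitespace collapsed."""
    parser = StatusExtractor(target_id)
    parser.feed(html)
    parser.close()
    return " ".join("".join(parser.chunks).split())


if __name__ == "__main__":
    print(extract_status(SAMPLE_PAGE))
```

In a real skill you would probably run something like this on a schedule and save the result to a file, so that Alexa never has to wait for the page to be fetched.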

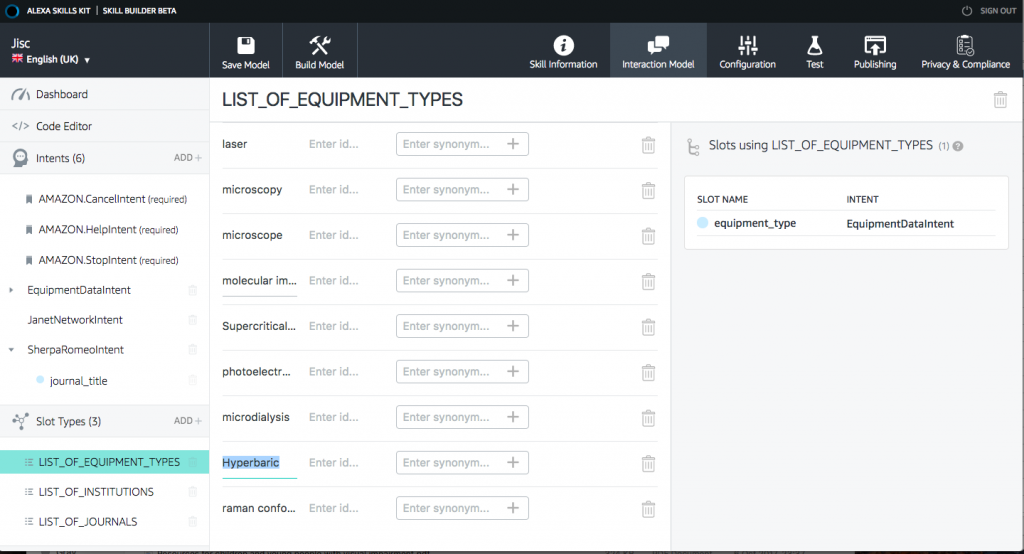

Slot values for equipment.data search

If our question has parameters, things do get a bit trickier – and this is where the final bit of mystique evaporates. Alexa doesn’t and can’t know all of the possible journal names or pieces of equipment that we might want to ask it about. Instead, when the skill is created, we tell it what the possible parameters are for the question. Amazon call these “slots”. When we’re asking about journal names we might include Nature, Computer Networks and so on in our slots. When we’re asking about equipment, our slots might contain mass spectrometer, spectroscope, hyperbaric chamber, and so on.
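Put together, the sample utterances and slots might look roughly like the following. This is a sketch written as a Python dictionary that mirrors the JSON interaction model format the Alexa developer console works with, as far as I understand it; the intent, slot and type names (`JanetStatusIntent`, `EquipmentSearchIntent`, `Equipment`, `EQUIPMENT_TYPE`) and the exact phrases are made up for illustration and are not the ones used in the real skill.

```python
import json

# Illustrative interaction model. All names and phrases are assumptions.
interaction_model = {
    "interactionModel": {
        "languageModel": {
            "invocationName": "jysk",
            "intents": [
                {
                    "name": "JanetStatusIntent",
                    "samples": [
                        "janet network status",
                        "status of the janet network",
                    ],
                },
                {
                    "name": "EquipmentSearchIntent",
                    "slots": [{"name": "Equipment", "type": "EQUIPMENT_TYPE"}],
                    "samples": [
                        "who has a {Equipment}",
                        "find me a {Equipment}",
                    ],
                },
            ],
            "types": [
                {
                    "name": "EQUIPMENT_TYPE",
                    "values": [
                        {"name": {"value": "mass spectrometer"}},
                        {"name": {"value": "spectroscope"}},
                        {"name": {"value": "hyperbaric chamber"}},
                    ],
                }
            ],
        }
    }
}


def example_phrases(model, intent_name):
    """List the phrases an intent covers by filling each slot placeholder
    with every value declared for that slot's type."""
    language_model = model["interactionModel"]["languageModel"]
    values_by_type = {
        t["name"]: [v["name"]["value"] for v in t["values"]]
        for t in language_model.get("types", [])
    }
    intent = next(i for i in language_model["intents"] if i["name"] == intent_name)
    phrases = []
    for sample in intent["samples"]:
        expanded = [sample]
        for slot in intent.get("slots", []):
            placeholder = "{" + slot["name"] + "}"
            next_round = []
            for phrase in expanded:
                if placeholder in phrase:
                    next_round.extend(
                        phrase.replace(placeholder, value)
                        for value in values_by_type[slot["type"]]
                    )
                else:
                    next_round.append(phrase)
            expanded = next_round
        phrases.extend(expanded)
    return phrases


if __name__ == "__main__":
    print(json.dumps(interaction_model, indent=2))
    for phrase in example_phrases(interaction_model, "EquipmentSearchIntent"):
        print(phrase)
```

The `example_phrases` helper is only there to make the point visible: the skill can only be asked about the slot values it was told about up front.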

So where does Alexa go to get the answers to these questions? The answer is that it makes a simple HTTP request to a web server somewhere. This can be any server, but Amazon are quite keen for you to use their new Lambda system, which lets you run code on demand without the overheads of running (securing, patching etc…) a regular server. Lambda is a whole story in itself, and for demo purposes I’ve simply pointed Alexa at an existing Jisc test server.
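The request that arrives at the server is a JSON document sent by HTTP POST, and the reply is JSON too. The sketch below shows the general shape in Python with the standard library only. It is not the code running on the Jisc test server; the wording of the replies and the `serve` helper are assumptions for illustration, and it leaves out the HTTPS setup and request signature checks that Amazon expect of a real endpoint.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def build_speech_response(text, end_session=True):
    """Wrap plain text in the JSON envelope that Alexa reads back aloud."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def answer(alexa_request):
    """Pick a reply based on the type of the incoming Alexa request."""
    request = alexa_request.get("request", {})
    if request.get("type") == "LaunchRequest":
        # The user opened the skill without asking anything yet.
        return build_speech_response(
            "Welcome. What would you like to know?", end_session=False
        )
    if request.get("type") == "IntentRequest":
        # A real skill would look up an answer here; this just echoes.
        return build_speech_response("You asked for " + request["intent"]["name"])
    return build_speech_response("Sorry, I did not understand that.")


class AlexaHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read the JSON body, compute the reply, and send it back as JSON.
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps(answer(payload)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json;charset=UTF-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8080):
    """Run a local test server (plain HTTP, local experiments only)."""
    HTTPServer(("127.0.0.1", port), AlexaHandler).serve_forever()
```

Calling `serve()` starts a local server that you can POST sample requests to, which is handy for trying out replies before any real device is involved.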

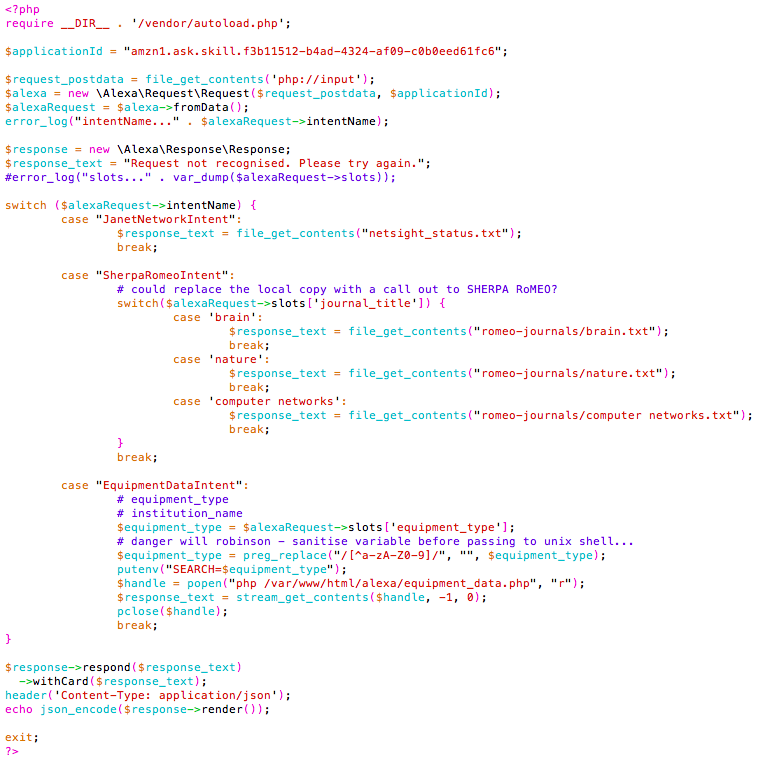

What does the code look like to process a request from Alexa? Pretty simple, actually. Here’s the actual code that I use to make the Jisc (Jysk!) Alexa skill work…

Alexa PHP sample code for the Jysk intent

Let’s spend a moment unpacking this – we’re using the Amazon Alexa PHP Library to process the incoming request. This makes an Alexa request object that contains the question and (if appropriate) the slot that Amazon think we were asking about. We can then decide what to do with the request. For the sample Jisc skill we fetch the Janet network status or journal policy information from a file that has already been populated separately, and for the equipment database lookup we go off to run an external program. Any external dependencies have to be pretty seamless, otherwise the user will be left waiting for ages wondering what is going on.
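For readers who don’t use PHP, the same flow can be sketched in Python. This is not a translation of the original code: the intent and slot names, the status file and the lookup command are all hypothetical. It just illustrates the two patterns described above, reading an answer from a file that was populated earlier and calling an external program with a time limit so the user isn’t left waiting.

```python
import subprocess
from pathlib import Path


def speech(text):
    """Wrap plain text in the JSON envelope that Alexa reads back aloud."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": True,
        },
    }


def read_cached(path, fallback):
    """Return the contents of a pre-populated file, or a fallback message."""
    try:
        return Path(path).read_text(encoding="utf-8").strip()
    except OSError:
        return fallback


def run_lookup(command, timeout_seconds=3.0):
    """Run an external program and return its output, or None if it
    fails or takes longer than timeout_seconds."""
    try:
        result = subprocess.run(
            command,
            capture_output=True,
            text=True,
            timeout=timeout_seconds,
            check=True,
        )
    except (subprocess.TimeoutExpired, subprocess.CalledProcessError, OSError):
        return None
    return result.stdout.strip()


def handle_intent(intent, status_file, lookup_command):
    """Dispatch on the intent name (names here are illustrative)."""
    name = intent.get("name")
    slots = intent.get("slots", {})
    if name == "JanetStatusIntent":
        return speech(
            read_cached(status_file, "Sorry, I can't check the status right now.")
        )
    if name == "EquipmentSearchIntent":
        item = slots.get("Equipment", {}).get("value")
        if not item:
            return speech("Which piece of equipment are you looking for?")
        # The slot value is passed as the last argument of the command.
        found = run_lookup(lookup_command + [item])
        if found is None:
            return speech("Sorry, the equipment search isn't available right now.")
        return speech(found)
    return speech("Sorry, I don't know how to help with that.")
```

Passing the command as a list, rather than building a shell string, keeps whatever the user said out of the shell, and the timeout gives a predictable apology instead of a long silence.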

It’s important to note here that you don’t have to release your work-in-progress Alexa skill to the world until you are ready – in the Alexa console you can specify that the skill only works on Alexa devices linked to your own Amazon account, which is probably best for testing. You can also simulate interaction with an end user to test your back end code independently of Alexa’s speech recognition.

Sometimes it’s easier to see things rather than read about them, so I’ve made a short video that walks you through the Alexa developer console and shows you how this all fits together:

So now you know how to make your own Alexa skills. What will you make? Why not leave a comment and let me know!